Faces are funny things. They are inordinately plastic and expressive, constantly changing according to our health, attitudes and moods—brightening and darkening, lifting and drooping, opening and closing—and always, inexorably in the process of ageing, whatever makeup or makeover manoeuvres we try to pull off.

Despite this plasticity, we are said to be able to recognise faces intuitively, calling on our hard-wired, animalistic and perhaps atavistic ability to home in on the generic (age, race, sex etc.) and ultimately specific identity of whoever is in our sights. Not surprisingly, scientists and technologists attempt to grasp this ability, tending to regard it as something biologically determined and universal, while others highlight significant cultural differences and the existence of particular ways of seeing. The science and technology of face recognition generates automated systems that allegedly not only match, but surpass human ability, and at the same time, it elides ways of seeing that were established during the industrial revolution in Western Europe.

Facial recognition technology (FRT) is a form of biometrics that, along with iris scanning and fingerprinting, adheres to the principle of indexicality, namely, the objective representation or even symbolic presence of the object – finger, iris, face – in an image. In this case, the image is twice removed from the object; a photograph of a photograph if you like, or a further digitisation of a digital image of a face. The aim of a facial recognition system is to either verify or identify someone from a still or video image. Following the acquisition of this ‘probe’ image, the system must first of all detect the face or distinguish between the face and its surroundings (easy for us, but not for computers). To do this it selects certain landmark features, such as the shape of the eyes and size of the nose, in order to compare them with the database. Either that or it generates what are called standard feature templates – averages or types. Once detected, the face is normalised or rather, the image is standardised with respect to lighting, format, pose and so on. Again, this aids comparison with the database. However, the normalisation algorithm is only capable of compensating for slight variations, and so the probe image must already be ‘as close as possible to a standardized face’. In order to facilitate face recognition, the already standardised image is translated and transformed into a simplified mathematical representation called a biometric template. The trick, in this process of reductive computation, is to retain enough information to distinguish one template from another and thereby reduce the risk of creating ‘biometric doubles’.
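The sequence described above (normalise, reduce to a template, then verify or identify) can be sketched in a few lines of Python. This is only an illustrative toy under stated assumptions, not how any commercial system works: the face is assumed to be already detected and cropped, images are small grids of greyscale values, the 'template' is a crude block average rather than landmark features, and the function names and the threshold of 0.5 are hypothetical.

```python
from math import sqrt

def normalise(image):
    """Standardise lighting: rescale pixels to zero mean and unit variance."""
    pixels = [p for row in image for p in row]
    mean = sum(pixels) / len(pixels)
    var = sum((p - mean) ** 2 for p in pixels) / len(pixels)
    std = sqrt(var) or 1.0  # a completely flat image would otherwise divide by zero
    return [[(p - mean) / std for p in row] for row in image]

def make_template(image, block=2):
    """Reduce the image to a short vector by averaging block x block patches."""
    template = []
    for r in range(0, len(image) - block + 1, block):
        for c in range(0, len(image[0]) - block + 1, block):
            patch = [image[r + i][c + j] for i in range(block) for j in range(block)]
            template.append(sum(patch) / len(patch))
    return template

def distance(a, b):
    """Euclidean distance between two templates of equal length."""
    return sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def verify(probe, enrolled, threshold=0.5):
    """One-to-one check: is the probe close enough to the claimed identity?"""
    return distance(probe, enrolled) <= threshold  # threshold is an assumed value

def identify(probe, gallery):
    """One-to-many search: return the name of the nearest enrolled template."""
    return min(gallery, key=lambda name: distance(probe, gallery[name]))

# Two toy 'faces' and a probe: face_a photographed under brighter lighting.
face_a = [[10, 10, 200, 200], [10, 10, 200, 200], [200, 200, 10, 10], [200, 200, 10, 10]]
face_b = [[200, 200, 10, 10], [200, 200, 10, 10], [10, 10, 200, 200], [10, 10, 200, 200]]
probe_image = [[p + 30 for p in row] for row in face_a]

gallery = {"a": make_template(normalise(face_a)), "b": make_template(normalise(face_b))}
probe = make_template(normalise(probe_image))

print(verify(probe, gallery["a"]))  # verification against the claimed identity "a"
print(identify(probe, gallery))     # identification across the whole gallery
```

Even in this toy the trade-off named above is visible: a larger `block` gives a shorter template but discards more of what distinguishes one face from another, which is how 'biometric doubles' arise.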

[ms-protect-content id=”8224, 8225″]

According to the manufacturers and promoters of face recognition systems, the complex sequence of technical operations and transformations performed on the face image (in a context where the distinction between face and image is first presumed – to allow for the possibility of representation – and then denied) does nothing to undermine the objectivity of the process. This is partly because the underlying principle of the system is photographic, and historically, the authority of photography derives not only from its strong claim to indexicality, but from its development and use in the very institutions in which it continues to be deployed. The history of photography as an imaging technology that is inseparable from the disciplinary institutions of the nineteenth century is very well documented. In the context of the industrial revolution in Western Europe, there was a perceived need to cater for and control the newly urbanised masses, to combat and reform the spread of poverty, disease and crime and to render productive an unproductive population of the sick, the mad and the bad. A new police force emerged – alongside hospitals, schools, asylums and workhouses – and it was here that Alphonse Bertillon developed the first criminal identification system using photography in conjunction with anthropometric and statistical methods. Anthropometrics are broadly equivalent to contemporary biometrics, and Bertillon took eleven measurements of each individual criminal’s body, recording them on index cards alongside frontal and profile portraits and a brief verbal description of distinguishing features. As his archive of the portrait parlé built up, he needed to organise it, which he did by incorporating the concept of the average man. Statistically, the average or mean could be expressed using the bell-shaped curve, but as Allan Sekula points out, it was also conflated with normality and the social ideal while difference from the mean was similarly conflated with deviance.
Moreover, this slippage from a purely statistical to a discriminatory social law of averages was backed up by a climate of belief in the quasi-sciences of physiognomy and phrenology. While phrenology was concerned with correspondences between skull topography and localised mental faculties, physiognomy assigned essential characteristics to the arrangement of facial features so that a narrow forehead, for example, was taken as a sign of low intelligence. More generally, in his chapter on criminal physiognomy, Havelock Ellis wrote that beautiful faces ‘are rarely found among criminals. The prejudice against the ugly and also against the deformed is not without sound foundation’.

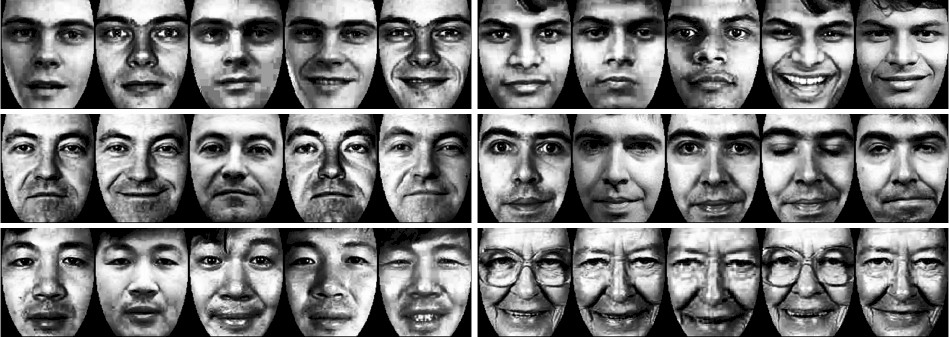

Sekula is clear about the central if problematic role of photography in generating this huge and ignoble archive of the nineteenth century. Photography operated as an effective mechanism of surveillance, recording, normalisation and social control but could not alone secure the identities of criminals, requiring instead the addition of verbal, anthropometric and statistical measures. The authority of Bertillon’s criminal identification system rested not on the camera but on a ‘bureaucratic-clerical-statistical system of “intelligence”’. This system has continued to be updated ever since and, despite the so-called shift from analogue to digital imaging, was manifest in the computerised criminal identification systems of the late twentieth century. I have argued elsewhere that these systems, in operation internationally, drew on a database of analogue and digital photographs and were organised and operated according to recognisable principles. The inventor of PhotoFIT (which became the basis of E-FIT and CD-FIT) based his identification system on the establishment of facial norms and on measuring deviance from that norm (fig). He linked facial features with personality, arguing that, for example, a mouth with upturned corners would have a perpetually cheerful owner while ‘a full, fleshy-lipped or loosely moulded mouth in itself suggests a basic general lack of control over emotional urges’. While a traditional photographic album might still be used for recognition or identification purposes (where the suspect was not known to the witness but might be known to the police), computerised systems were used to assist the witness in facial recall. These systems depended on two different forms of coding and on the use of specialist interview techniques. Extracting a face and thereby an identity from the witness’s memory has never been an easy thing to do. Geometric coding involved measuring facial features from images and is still the basis of what happens now in FRT. 
Syntactic coding used descriptions of faces rather than measurements, and was perhaps more closely related to the sort of observations witnesses make, such as “he had a long nose” or “she had protruding ears”. The image of the face was built up in quite a painstaking way, feature by feature (fig), and the process was long and arduous, more often than not resulting in witness fatigue – and in failure. The efficacy of these then new computerised systems was questioned at the time, and yet they continued to be produced, pushed through not so much by technical as by market forces.

Twenty-first century facial recognition technologies are embedded with this legacy of technically limited and politically problematic – sociobiological – ways of seeing, regardless of any claim to neutrality and improved efficiency. Nevertheless, after the events of 9/11, the demands on these inadequate bureaucratic-clerical-statistical systems of intelligence have increased exponentially. Increasingly, they are required to act retrospectively, not only capturing a face, and thereby an identity, from an image but securing us from terrifying, terrorising events that have already taken place – and could therefore take place again. Associated with the development of CCTV cameras installed, arguably, to protect property rather than people, these somewhat frustrated and angry calls for a technology that has let us down to time travel on our behalf – to undo bad things that have happened, to make good and prevent bad things from ever happening again – are not new. Characteristic of public responses to the grainy “security” camera images that failed to prevent the abduction and murder of James Bulger in 1993, such calls were repeated with a vengeance in the wake of 9/11. Then, the problem of witness fatigue and failure that had marred earlier systems was writ large, so large in fact that the role of the eyewitness has subsequently been marginalised and slowly eliminated from increasingly automated systems. From a policing and intelligence point of view, the terrorist attacks of 9/11 were marked by a major failure, on the part of airport security staff, to identify Mohammed Atta and his associates who had already been “seen” by the security cameras and who, in two cases, were already “captured” in the US intelligence database. From this point onwards, the effectiveness of facial recognition has been premised on the elimination of the eyewitness, always the weakest link, as the main bug in the machine.

It is important to emphasise that automated face recognition systems are not post 9/11 technologies. Their history, as I’ve suggested, is much longer, stretching back to the nineteenth century and, in recent decades, becoming part of what David Lyon describes as the convergence between systems of surveillance and marketing and the spread of neoliberal forms of governance. For me, FRT is also intrinsically connected to the ongoing development and re-branding of the project of ubiquitous computing, understood here as the increased dispersal of networked intelligence and distributed computing into both public and private spaces. Within this particular area of computer science, intelligence is understood as the system’s ability to learn from, and adapt to its users and its environment. There is a principle of technological autonomy in play here, as well as a rhetorical claim to flexibility and user-friendliness. But while flexible and user-friendly intelligent computing, embedded and concealed in our homes and cities, appears to offer us comfort and convenience, a personalised customer service, it also offers an unprecedented level of individual monitoring, tracking and control that threatens to transform human subjects into de-personalised data – and turn us into nothing more than market fodder. In the near future, cameras linked to FRT may well be installed in shopping malls and higher end shops. Their task will be to isolate individual customers and tailor marketing and displays to suit them. Ivor Tossell reports that Intel, the computer chip manufacturer, is working on facial recognition systems that profile customers: ‘a camera mounted on a large LCD screen watches for faces that come within four to six metres. The screen can switch ads depending on the kind of face that walks by.’ Although it was based on measurements of the individual criminal, Bertillon’s system related the individual to the group by establishing both norms and deviants. 
Contemporary FRT makes the same moves whether the context is institutional or commercial, classifying and segregating individuals into groups and types depending on their appearance as an indicator of behaviour, and evincing a form of biopolitical control that is perhaps more effective, or at least more insidious, for being a bit softer.

Biopolitics is Foucault’s term for the way that power operates at the level of individual and social bodies as well as, and in relation to, the state. Crucially, for him, power is not a one-way, top-down, state to subject process caught up exclusively with technologies of domination, but it is also negotiated by means of technologies of the self that are both restrictive and enabling. FRT, incorporating a particular history and tradition of photography, might then be considered to be a technology of domination and of the self, simultaneously limiting and facilitating what individuals can be and do in relation to a set of external norms. Its role is thus governmental, but by no means confined to the institutions of government. With its roots firmly established in the US departments of defence and homeland security (which continue to provide the bulk of research and development funding), FRT is becoming increasingly commercialised and somewhat controversially incorporated into forms of social networking including Facebook and, more hesitantly, Google. Using FRT, Facebook makes automatic tag suggestions to its users (600-800 million worldwide) as they upload photographs of friends. This “service” is activated by default (meaning you have to opt out of it) and generates lucrative volumes of biometric data destined for advertisers, app developers and so on. Since, technically, biometric data can be reconnected with its original owner, privacy concerns are real, but they are also, arguably, overshadowed by the image and infrastructure of total surveillance which significantly out-performs the actual technology. That this continues to be almost ridiculously limited – by everything from poor lighting, viewing angles that are not the standard frontal or profile, obstacles like hair and glasses, low resolution and expressions in excess of the average mug shot – is of little consequence when we consider what the system as a whole is able to produce.

The FRT system as a whole is comprised of technologies and users, images, infrastructure, investment, expectation and belief. It produces faces as quasi-objects, at once detached from, and conflated with bodies that are, in turn, detached from and conflated with identities. These faces are literally re-cognised, re-thought and re-coded as static images that were perhaps falsely divided by Sekula into honorific and repressive categories. It was John Tagg who showed how each category leached into the other, making mug shots of us all. But Sekula was right to argue that the photographic codes established in the nineteenth century would persist. One of the algorithms used in FRT produces images akin to Francis Galton’s (eugenicist) composites by removing extraneous information and decomposing faces into standardised types called eigenfaces (fig). Another creates classes of faces, much like Havelock Ellis did in his physiognomy of criminals (fig). FRT as a system of ‘mass individuation’ still belongs to Bertillon. Outmoded beliefs and prejudices are kept alive by the persistence of institutional ways of seeing that surface in times of crisis – generating, for example, the highly racialised “face of terror” – and submerge into the seemingly innocuous, de-politicised sphere of everyday life where faces are in fact becoming big business, and subjects are re-ordered – and re-order themselves – as data objects for the market.

The image and operation of total surveillance and marketing, under the aegis of neo-liberalism, perform well – much, much better than the technology itself – but are not infallible. It is not even completely possible to remove the bug in the machine (since no technological system is ever truly autonomous and independent of human operators), let alone, as it were, the spanner in the works. Face distortion software, sold commercially, codes for precisely what FRT systems seek to eliminate or, through the use of automated expression analysis, reduce to measurable, standardised templates. It posits precisely the sort of inventive, quirky, elusive identity that haunts FRT – as it has all previous identification systems – as the spectre of system failure. Apple’s Photo Booth is one key example (fig). Designed for the iPad, it is marketed as ‘your very own candid camera’ in which you take snapshots of yourself and your friends and render them ‘whacky’ and ‘bizarre’ by stretching, twisting and squeezing the face or by subjecting it to familiar effects including x-ray, thermal imaging and kaleidoscope. But these effects are arguably just that, and it is no coincidence that the advert displays the ‘normal’ face in the centre of the screen, where it is still very much the reference point, the standard, the norm. The marketisation of face distortion is a means of containing it, of keeping the mechanism of facial identification in good working order. Here, as with other biotechnologies, the opportunities for change – in ways of seeing and ways of being – that are opened up by the synthesis of biological and technological systems are flirted with, exploited and quickly foreclosed.
However, the safe set menus of commercially available face distortion software are not necessarily adhered to in everyday photographic practices where the faces that are busy becoming business are displayed, as they always have been, in galleries and albums and personal archives that are neither honorific nor repressive, but silly and perhaps subversive with posed, playful and sometimes downright ugly expressions that defy their own categorisation.

Finally, what FRT produces most clearly is the ongoing history of photography as an imaging technology increasingly entangled with others, and now part of the project of ubiquitous, “intelligent” and distributed computing. These environmental and always already social systems of computing redefine, in turn, what photography is, even while, by continually distancing and digitising and hybridising it, they turn it into its own elusive identity. As a ubiquitous system, FRT enrols faces as well as photographs at a distance. In public spaces like cities and airports we don’t always know it is happening. In a real sense, we are making up, composing, the systems that re-make us, in ways that are all too familiar, but not wholly inescapable.

[/ms-protect-content]

Published in Photoworks Issue 17, 2011

Commissioned by Photoworks