Lewis Bush discusses Big Data and the rise of big photography.

Previous eras in human history were defined by our use of physical materials. The Stone, Bronze and Iron ages were all shaped by our ancestors’ ability to fabricate and employ these corporeal resources. Even later ages that were named for more abstract processes were still only made possible by advances in the physical realm. The industrial age, for example, was really the era of steel and steam as much as it was the age of capital or free markets. Our present era, the digital or data era, is perhaps the first exception to this: an epoch defined by a resource which is entirely intangible.

The liberation of information from a physical form makes possible the generation and exchange of this resource on an unimaginable scale. In 1986, global data in both physical analogue and electronic digital formats was estimated at less than three exabytes – equivalent to three billion gigabytes. By 2007 that total had grown to around three hundred exabytes, the vast majority of it digital, in other words ephemeral. Since 2012 it has been estimated that around 2.5 exabytes of new information are generated daily. Put another way, every twenty-four hours we generate almost as much new information as the sum total of the human knowledge that existed just thirty years ago.

Easy as it is to coolly note these numbers, the quantities of information they represent are so vast that they defy any real attempt to make sense of them. In response to this inexplicable scale a new trend in computing has emerged over the past decade. Big Data computing involves the storage and processing of very large data sets, vast quantities of related information of the sort we have only really started to see appear in the age of an internet-connected world. Big Data is novel compared to older forms of supercomputing, not just in terms of the huge quantities of information it can sift and resolve, but also for the speed with which it does so, its comparatively decentralised architecture, and its use of advances in computer algorithms to search for patterns and insights in far more sophisticated ways.

Big Data is not without its critics. Some of its detractors see it as a passing fad, others criticise the fact that it is still only vaguely defined as a field, and others still argue that despite the greater scale of information involved, it sheds little new light. For its adherents though, Big Data is here to stay, and according to them it has the capacity to revolutionise the way we use information. Indeed some of them would argue it already has, and Big Data has so far been put to use in a wide array of fields. Perhaps the most prominent of these is its use in the analysis of the online behaviour of hundreds of millions of people participating in social networks, using this past history to anticipate future interests and actions and allowing advertisers to target them in more specific and accurate ways.

One area that remains little explored, though, is the implications of this form of computing for the visual realm. The overwhelming quantity of information that we have to contend with is perhaps nowhere more in evidence than in the field of photography. As many have noted, the images we presently generate in a year outnumber all the photographs produced in the previous two centuries of the medium’s existence. The ‘blizzard of images’ that so worried the Weimar German cultural critic Siegfried Kracauer when he wrote about it in 1927 increasingly seems to resemble nothing but a light frost in comparison to our own raging storm of pictures.

The fact of photography’s present super-abundance though is not nearly so interesting as how this torrent affects us, alters our relationship with images, and shapes our understanding of the things they show. The rarity of photographs once played a part in defining the importance of the things they illustrated. The fact that something was worth photographing meant that in some sense it was worth seeing and knowing about. But in an age when everything is photographed and shared it becomes almost impossible to discern what amongst these countless images is worth paying attention to.

One response to this ocular crisis is to apply the principles of Big Data to visual media, a practice which might perhaps be termed ‘Big Photography’. So far there has been only tentative progress in this area, and little recognition or understanding of even these modest advances and their implications in the broader photographic community.

Progress has been slow in part because of the technical difficulties. Storing the vast numbers of photographs required for a broad analysis has traditionally been prohibitively expensive, but the cost of storage is falling rapidly. Additionally it is interesting to note that many of the companies that are presently leading the way in the use of Big Data, for example Facebook, Yahoo, and Google, are already sitting on enormous caches of photographs accumulated through other areas of their business activities. Considering that these companies are constantly seeking new ways to monetise what they do, their joining together of these two presently unrelated areas of activity seems like only a matter of time.

A bigger issue though has been image analytics itself, the ‘automatic algorithmic extraction and logical analysis of information found in image data’. That this is difficult is perhaps little surprise: thirty percent of the human brain is taken up with visual processing, since interpreting the visual is resource-intensive, complex and subjective. Attempts to teach computers to understand images have been slow, but we are starting to see interesting breakthroughs. Teams at Google and Stanford University for example have recently made strides in teaching computers to analyse and accurately caption photographs. They did this by combining artificial neural networks, groups of algorithms modelled on the neurons of the human brain. Part of the significance of this breakthrough hinges on the ability of these networks to proactively learn and develop, becoming more attuned to the subtleties of images as they encounter more and more of them.

The question of ‘how’ seems to be slowly taking care of itself, but another pressing question is ‘why’? What might the consequences be of this sort of analysis of photographs, and could they be broadly positive or negative? As noted, the commercial sector is already leading the way in Big Data, and it is not difficult to see how a company like Facebook might benefit from the ability to analyse the huge numbers of photographs that are uploaded each day to its servers. As we become increasingly reticent about sharing aspects of our personal information online, companies might turn to the photographs we upload as a way to extract information about our interests and habits. The power of Big Data computing in part also lies in the cross comparison of data, and the ability to compare uploaded photographs with other online sources of imagery – for example the enormous mass of imagery contained in Google’s Street View platform. Combined, these different visual resources could open up all sorts of possibilities for plotting out a user’s habits, interests and predilections, the implications of which are at best ambiguous.

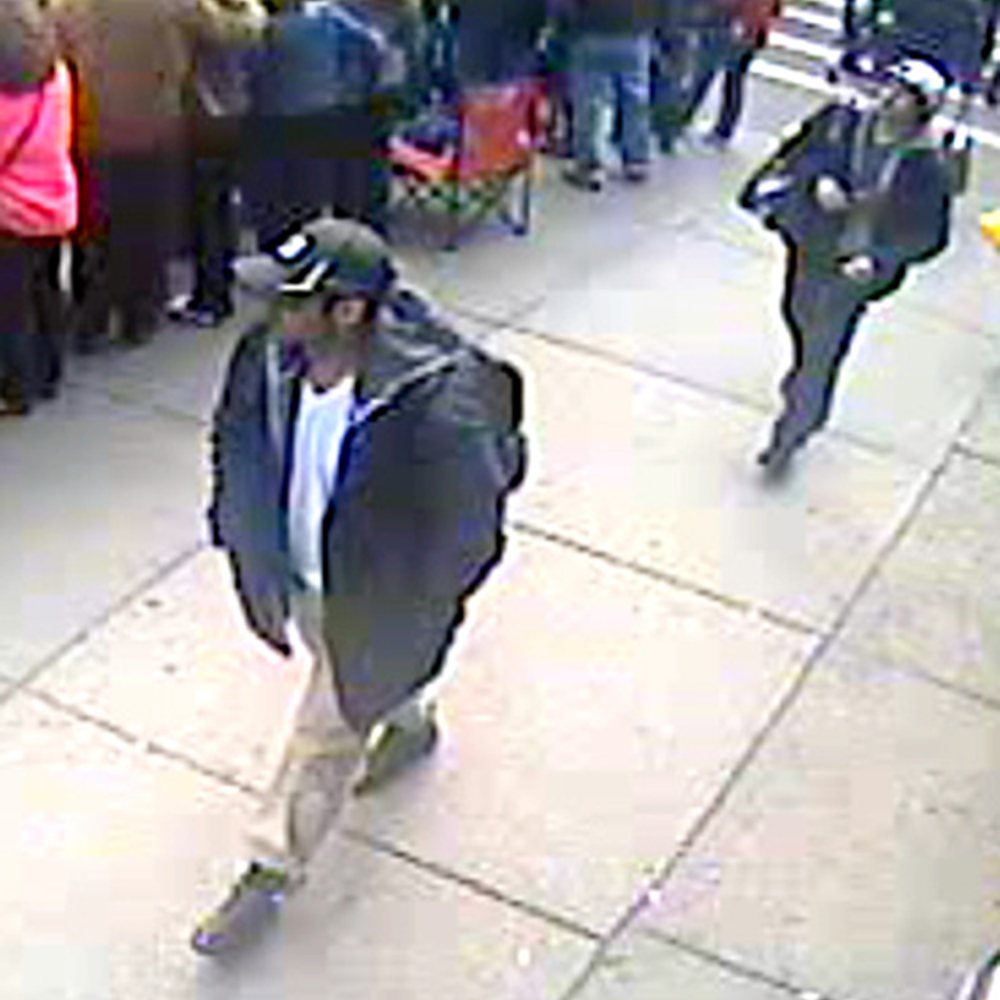

There are also rather more positive applications emerging in the non-commercial sector. One area where this sort of analysis is starting to be employed is in healthcare, where research is leading to algorithms able to process the vastly detailed imagery produced by modern diagnostic machines, and even automatically prescribe further action based on what they find. Another future use was anticipated in the aftermath of the 2013 Boston marathon bombings, when the police attempted to manually create a three-hundred-and-sixty-degree timeline of events, using the 10 terabytes of publicly submitted images and video which had been produced in the moments leading up to and just after the detonation of the bombs. As Anssi Männistö has suggested, a future could be at hand where this process could be automated, and where major events are plotted out using the vast quantity of public and semi-public images which are now inevitably produced in their wake. It might even come to be possible to analyse the vast numbers of images that appear on social media in near real time, checking them for potential warning signs of an impending attack. Tools like behaviour mapping and facial recognition are becoming as revolutionary to policing as fingerprinting proved to be a century ago.

However, as this last possibility suggests, in the hands of the state these advances could have negative applications as well as positive ones. It is maybe not surprising that major strides in the area of image analytics are being led by the defence industry. Sophisticated modern militaries produce vast quantities of visual information, from spy satellites to helmet cameras, and this tendency is also permeating into the arena of civilian law enforcement with the growing use of body cameras and the ever-growing quantity of CCTV surveillance. As one commentator observes, we ‘expect the nation’s defense and security effort to be cost-effective’ with the consequence that we will increasingly ‘move to a smaller but more educated fighting force[s] and at the same time increase the use of remote sensing, observation and monitoring tools’. This will inevitably increase the burden of analysis. Given the recent revelations about the NSA’s surveillance programs it wouldn’t be difficult to imagine ways that this sort of image analysis might be used to trawl vast quantities of online imagery looking, for example, for clues to the location of a particular person. The NSA continue to expand their capacity to store data, with the completion last year of a vast new data centre in rural Utah.

As with any advance, those large organisations with the resources to experiment tend to lead the way, with the rest of us caught in their wake and taking up these innovations later. Even so, in time this sort of analysis could offer possibilities for ordinary citizens to resist and challenge autocratic state or corporate power, as much as it might presently threaten to support it. Early models of decentralised computing like the SETI project (which unites millions of home computers to analyse interstellar radio signals) demonstrate how massive data analysis might be crowdsourced using the distributed computing power of willing volunteers. One day journalists might be able to use similar approaches to automatically sift and verify images from the growing number of citizen-produced images that are taken in the wake of any news event, or to analyse future leaks of the quantities of visual information produced by governments and militaries. Relying on labour-intensive manual verification, as websites like Egypt’s Da Begad do, will eventually become unsustainable.

There will also of course be uses that seem entirely banal. A group of researchers have used a combination of metadata, image content analysis and comparison to other factors like location and weather to determine whether a tourist landscape photograph is a good one or not. Even the fashion industry is starting to pay attention to the possibilities of using Big Data to anticipate future trends, an approach which might one day extend to online image analysis as a way to detect new clothing trends and judge when they have reached their peak.

In all such uses, positive, negative or indifferent, it also has to be considered that this sort of data analysis, and the algorithms that perform it, are themselves far from neutral. Algorithms can have biases built into them, accidentally or intentionally, and if we come to rely on them to make increasingly important decisions we have to become ever more keenly aware of these prejudices. As Jay Stanley writes, where constant image analysis is based on the idea of searching for deviations from a perceived norm, it could lead to excessive pressures on minorities to conform. We will soon need to move towards a framework for creating ethical algorithms, perhaps not unlike Isaac Asimov’s Three Laws of Robotics. We also have to think about how advances in this sort of automated image analysis might have a knock-on effect, for example opening the floodgates for ever more pervasive use of CCTV and other technologies of surveillance.

Data then, and particularly visual data, is the defining resource of our era, but it is one which has been perversely hampered by its own great quantity. The flood of images is not something which will now abate, however much it might be decried by those who ask us all to photograph less. Instead of shamanistically invoking a less image-saturated past we need to look to solutions for the present and recognise that we might at last be on the cusp of technologies which can help us make sense of these enormous changes. As with any innovation, the potential is as much to the good as to the bad. We must decide now how we want these technologies to be used to improve our lives, and vigorously challenge those uses that threaten to undermine them.